ONTOGUARD AI

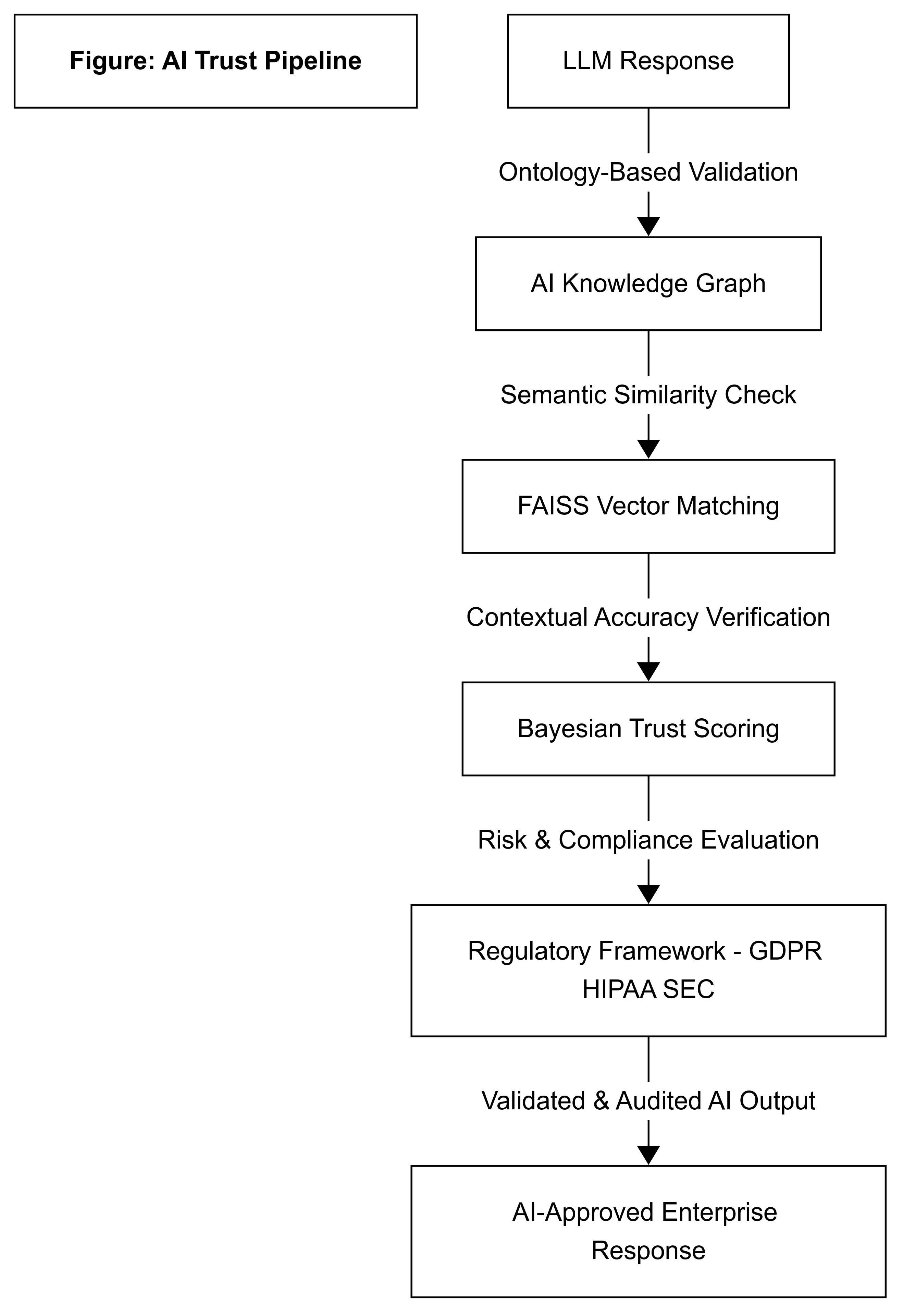

The Compliance & Trust Plugin for Large Language Models

A lightweight overlay that wraps around your LLM, delivering real-time trust scoring, symbolic validation, and legal compliance. No retraining. No rewriting. Just plug it in.

A large language model analyzing regulatory disclosures produced three outputs flagged as high-risk for SEC violations.

OntoGuard AI automatically tagged, symbolically traced, and scored each instance, all in under 50 ms, enabling real-time auditor review before filing.

This prevented potential reporting infractions without requiring retraining or model slowdown.

📄 NDA Access Request

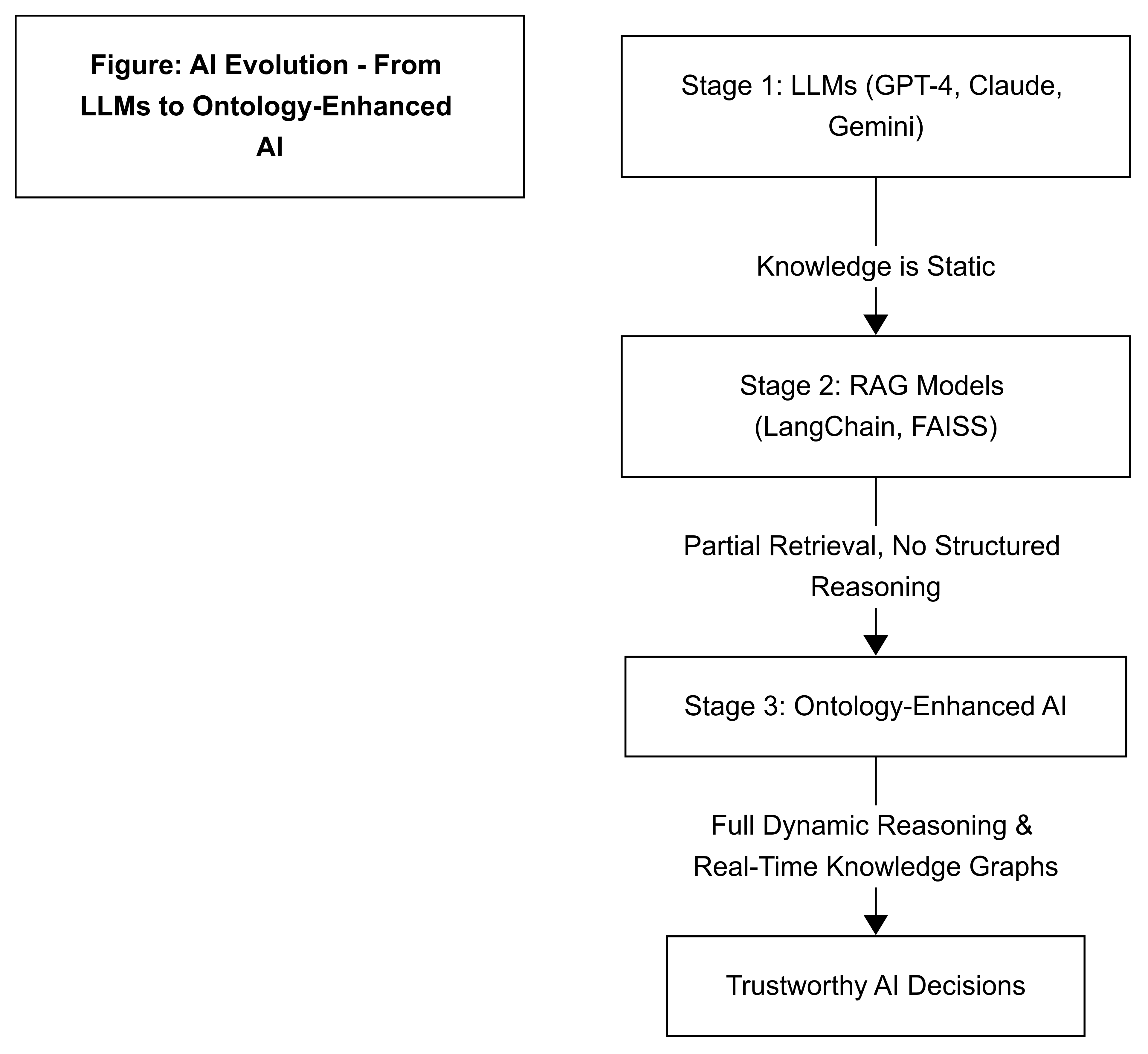

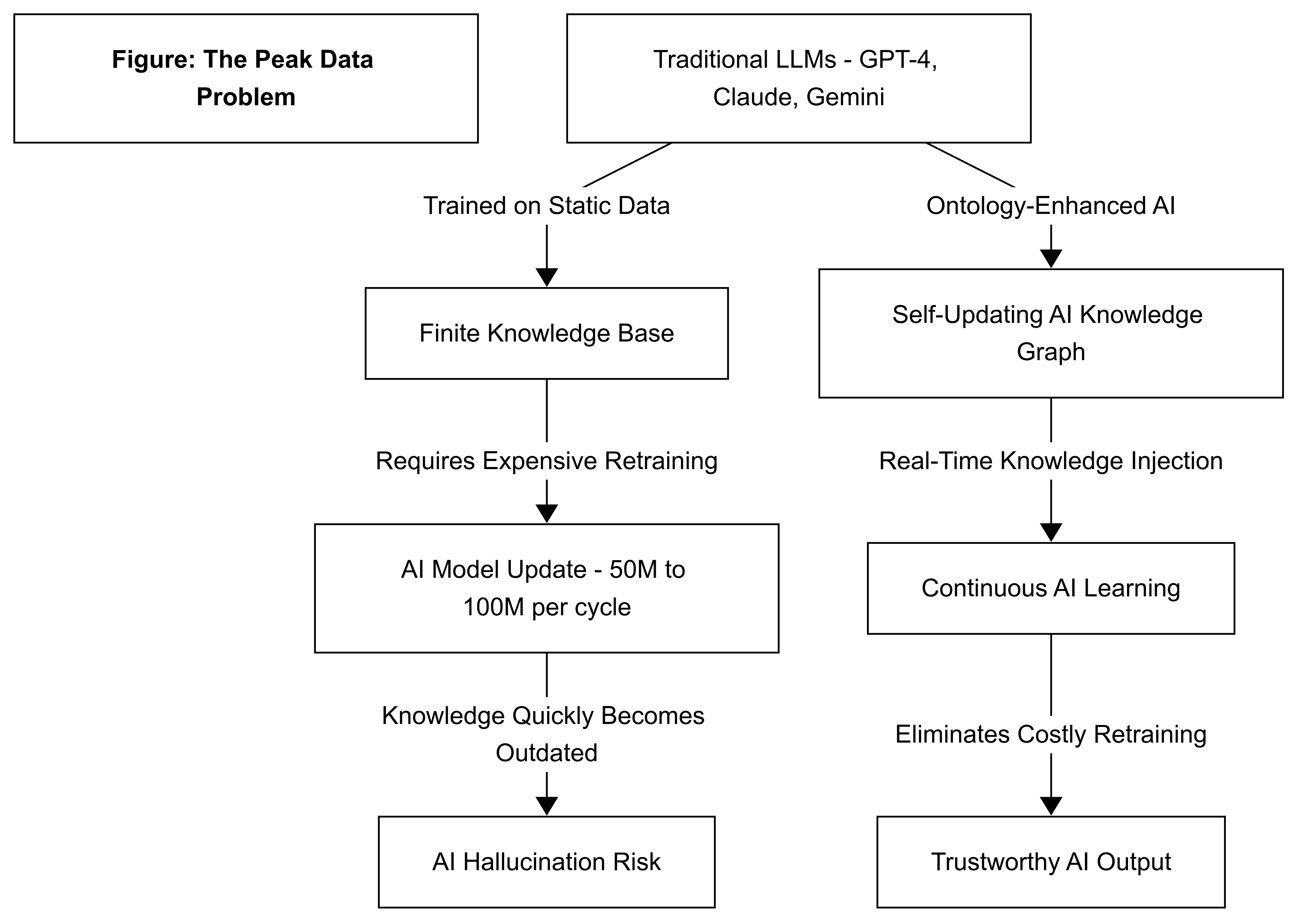

Our patent-pending system weaves dynamic knowledge with validation, ensuring compliance and trust.

Full technical details available under NDA.

Explore the live demo or reach out for a deeper strategic discussion under NDA.

We'll respond with NDA terms and provide access to private demo assets, integration materials, and partner briefings.