What is risky?

Detect unsafe recommendations, customer-impacting language, unsupported claims, and outputs that should not move forward without review.

Start without uploading internal documents. Send us 50–500 prompts, responses, or AI logs. OntoGuard shows what is risky, overconfident, inconsistent, review-heavy, ready for autonomy, or useful as a training signal.

For high-stakes workflows, OntoGuard can also return ALLOW, BLOCK, or ESCALATE before release and generate a Decision Authorization Packet with evidence, uncertainty, audit hashes, routing, and improvement signals.

Decision Authorization is a premium capability inside the broader Semantic Control Plane. Last updated: May 20, 2026.

Watch how OntoGuard turns AI outputs into governed business decisions — measuring risk, routing human review, preserving evidence, and producing proof before high-stakes AI actions move forward.

No internal documents, production deployment, or model retraining is required to start with OntoGuard.

OntoGuard does not need your legal files, policy manuals, claims folders, contracts, or internal procedures to deliver first value. Send the AI outputs your system already produces. OntoGuard analyzes the behavior itself.

Detect unsafe recommendations, customer-impacting language, unsupported claims, and outputs that should not move forward without review.

Find outputs that sound certain when uncertainty, escalation, evidence gaps, or human judgment should be preserved.

Route only the outputs that need expert attention, with reason codes and reviewer context.

Classify workflows by readiness: draft only, recommend with review, release low-risk outputs, escalate sensitive outputs, or block unsafe outputs.

Identify avoidable review volume, repeated escalation patterns, and outputs that can be handled through lighter review paths.

Turn governed outputs into clean learning signals: good examples, blocked examples, escalation examples, rewrites, and reviewer-labeled outcomes.

OntoGuard analyzes AI behavior from outputs, logs, and proposed actions — then turns that behavior into operational intelligence.

OntoGuard can start as a lightweight assessment, a vendor proof pack, or a review-compression pilot — without requiring a full enterprise deployment.

Send us 50–500 AI outputs. OntoGuard shows what is risky, overconfident, inconsistent, review-heavy, ready for autonomy, or useful as a training signal.

Run Risk Scan

Turn your AI demo into enterprise-ready proof: governed outputs, risk signals, human-review routing, auditability, and improvement signals.

Request Demo Proof Pack

Find which AI outputs actually need expert review, which can be lightly reviewed, and where expensive reviewers are wasting time.

See Review Compression

Send 50–500 AI prompts, responses, or API logs. OntoGuard returns a behavior risk map showing which outputs are safe, risky, overconfident, inconsistent, review-heavy, ready for autonomy, or useful as clean learning signals.

OntoGuard does not eliminate domain experts. It reduces how often they are needed and focuses how their time is used.

Instead of asking experts to review every AI output from scratch, OntoGuard packages the decision, evidence, gaps, uncertainty, policy scope, and reason codes into a reviewable packet. The human reviewer becomes an exception authority, not a full-time AI babysitter.

The goal is not “no humans.” The goal is fewer blind reviews, faster escalation, clearer accountability, and better use of expensive expertise.

In a pilot, OntoGuard measures escalation rate, average review minutes, reviewer role, false-approval reduction, and cycle-time impact so the cost of human review becomes visible instead of assumed.
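As a rough illustration of how two of these pilot measures could be computed from a batch of governed runs, here is a minimal Python sketch. The record shape (`decision`, `review_minutes`) and the function name `pilot_metrics` are assumptions made for this example, not OntoGuard's actual schema.

```python
from typing import Dict, List


def pilot_metrics(runs: List[Dict]) -> Dict[str, float]:
    """Summarize review burden for a batch of governed runs.

    Hypothetical record shape (an assumption for this sketch):
    {"decision": "ALLOW" | "BLOCK" | "ESCALATE", "review_minutes": float or None}
    """
    total = len(runs)
    escalated = sum(1 for r in runs if r["decision"] == "ESCALATE")
    # Only runs that a human actually reviewed contribute to the average.
    minutes = [r["review_minutes"] for r in runs if r.get("review_minutes") is not None]
    return {
        "escalation_rate": escalated / total if total else 0.0,
        "avg_review_minutes": sum(minutes) / len(minutes) if minutes else 0.0,
    }
```

Comparing these numbers against the buyer's own baseline is what makes the cost of review visible; the sketch does not attempt the false-approval or cycle-time measures, which need reviewer-labeled outcomes and timestamps.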

Read the packet as a release decision, not as a standalone percentage. The score contributes to the decision; it does not replace evidence, policy scope, benchmark status, or human accountability.

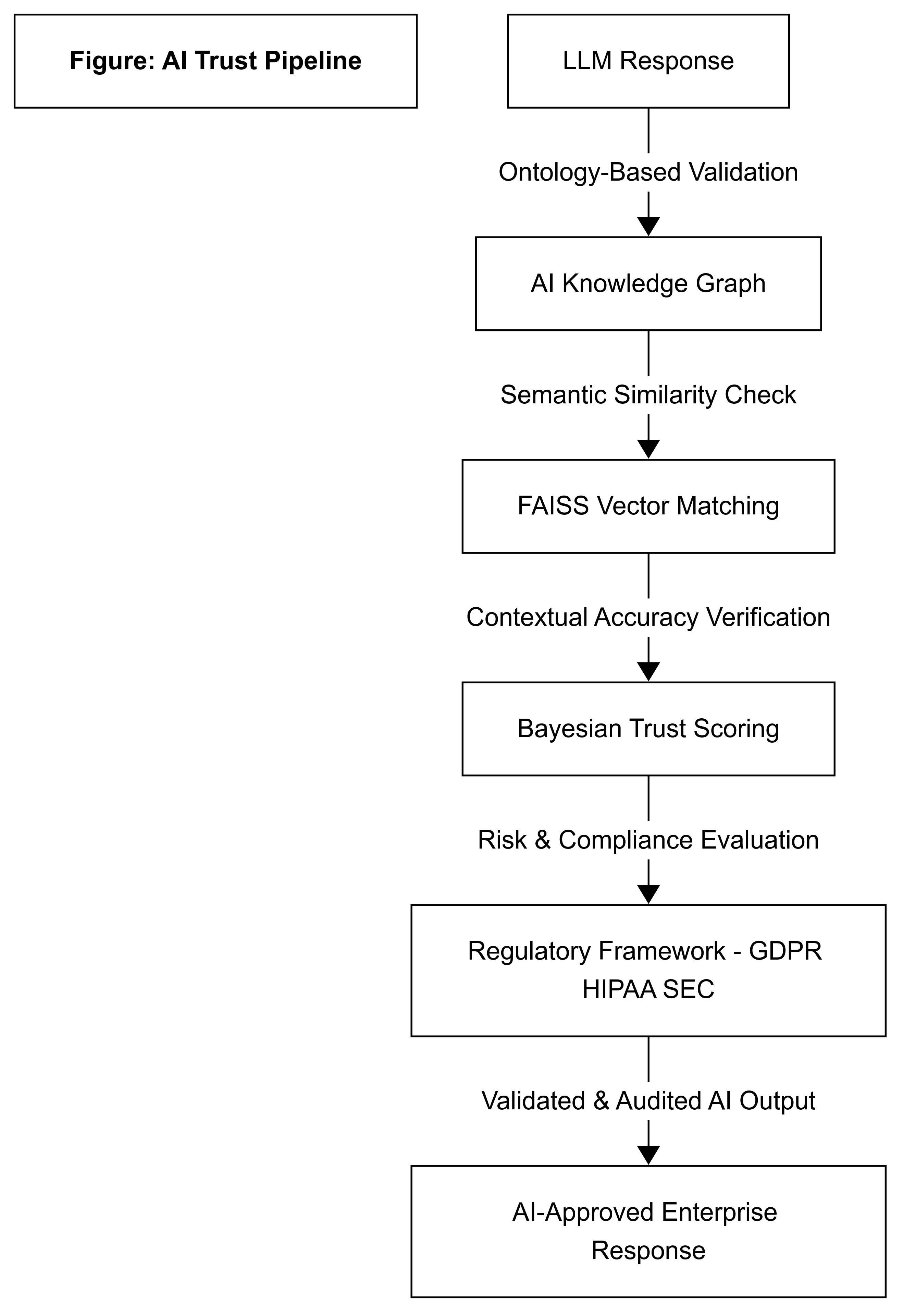

OntoGuard is not a scoring dashboard. It is a semantic control plane for governing AI-driven state transitions. It can return ALLOW, BLOCK, or ESCALATE when an AI output or action requires release control.

OntoGuard does not approve AI actions because a trust score is 81%, 85%, or 95%. The score is one signal inside a governed decision process.

OntoGuard evaluates evidence coverage, uncertainty, citation gaps, policy scope, risk, benchmark status, and human-review requirements before returning ALLOW, BLOCK, or ESCALATE.

A high score can still result in ESCALATE if evidence is incomplete, citations are pending, risk is unresolved, or human review is required.

OntoGuard does not tell you “81% means yes.” It tells you whether the AI output is safe to release, should be blocked, or must be routed to a responsible reviewer — with a proof packet explaining why.
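The idea that a score is one signal among several can be sketched in a few lines of Python. Everything here is an assumption for illustration: the field names, the 0.80 threshold, and the rule ordering are hypothetical and are not OntoGuard's actual decision logic.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "ALLOW"
    BLOCK = "BLOCK"
    ESCALATE = "ESCALATE"


@dataclass(frozen=True)
class RunSignals:
    trust_score: float            # 0.0 to 1.0; one signal, never the whole decision
    evidence_complete: bool
    citations_pending: bool
    risk_unresolved: bool
    human_review_required: bool
    policy_violation: bool = False  # hypothetical hard-stop flag


def decide(signals: RunSignals, allow_threshold: float = 0.80) -> Decision:
    # Hard stops come first: no score can override them (assumed rule).
    if signals.policy_violation:
        return Decision.BLOCK
    # Any open gap routes to a reviewer, however high the score is.
    if (not signals.evidence_complete or signals.citations_pending
            or signals.risk_unresolved or signals.human_review_required):
        return Decision.ESCALATE
    # Only with every gate clear does the score itself matter.
    if signals.trust_score >= allow_threshold:
        return Decision.ALLOW
    return Decision.ESCALATE
```

Under these assumptions a 95% score with pending citations still returns ESCALATE, which is the behavior described above.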

OntoGuard treats every AI decision as a governed moment in time. A score is never evaluated alone. The system records the prompt, proposed output, evidence state, scope, uncertainty, gaps, decision, reviewer route, and audit hashes for that specific run.

If the evidence improves later, the decision can be re-run and compared. OntoGuard’s value is that each decision is traceable, reviewable, and repeatable — not that one percentage is permanently “true.”
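One way to make a run traceable and comparable is to hash a canonical form of its record and diff the fields between runs. The sketch below assumes SHA-256 over sorted-key JSON; the source does not say which hashing scheme OntoGuard uses, and the function names are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, Tuple


def audit_hash(record: Dict[str, Any]) -> str:
    """Hash a run record deterministically (assumed scheme: SHA-256 of canonical JSON)."""
    # Sorting keys and fixing separators makes the hash independent of key order.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def diff_runs(before: Dict[str, Any], after: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return only the fields that changed between two runs of the same decision."""
    keys = sorted(set(before) | set(after))
    return {k: (before.get(k), after.get(k)) for k in keys if before.get(k) != after.get(k)}
```

If a later run with better evidence flips the decision, `diff_runs` shows which fields moved, and the two hashes identify each run unambiguously.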

Different branches, verticals, and workflows can use different policy profiles, evidence requirements, thresholds, approver roles, and escalation rules. A 79% decision in a low-risk workflow may be handled differently from a 95% decision that conflicts with the current business objective, policy scope, or regulatory context.

OntoGuard evaluates the decision against the active workflow criteria — not against a universal percentage.
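To show why the same percentage can lead to different outcomes, here is a small Python sketch of per-workflow policy profiles. The profile names, thresholds, approver roles, and evidence labels are all invented for illustration; real profiles would be configured per deployment.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class PolicyProfile:
    allow_threshold: float
    approver_role: str
    required_evidence: FrozenSet[str]


# Hypothetical profiles: a low-risk and a high-stakes workflow.
PROFILES = {
    "internal_draft": PolicyProfile(0.75, "team_lead", frozenset({"source_doc"})),
    "customer_credit_notice": PolicyProfile(
        0.97, "compliance_officer", frozenset({"source_doc", "regulatory_citation"})
    ),
}


def evaluate(workflow: str, score: float,
             evidence: Iterable[str]) -> Tuple[str, Optional[str], List[str]]:
    """Return (decision, approver_role_if_escalated, missing_evidence)."""
    profile = PROFILES[workflow]
    missing = sorted(profile.required_evidence - set(evidence))
    if missing or score < profile.allow_threshold:
        return ("ESCALATE", profile.approver_role, missing)
    return ("ALLOW", None, [])
```

With these example profiles, a 79% score passes in `internal_draft` while a 95% score in `customer_credit_notice` is routed to the compliance officer.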

OntoGuard can start as a lightweight output scan and expand into runtime release control, proof packets, training-signal export, cognitive drift tracking, and agentic governance.

Analyze 50–500 prompts, responses, or logs to identify risk, overconfidence, inconsistency, review burden, autonomy readiness, and training-signal candidates.

Turn an AI demo into enterprise-ready proof: governed outputs, risk signals, review routing, auditability, and improvement signals.

Identify which outputs need expert review, which can use lighter review, and where expensive reviewers are spending avoidable time.

Runtime API that returns ALLOW, BLOCK, or ESCALATE with release status, routed_to, business effect, reason codes, evidence references, and audit identifiers.

Portable PDF and JSON proof artifact showing how a high-stakes AI output was authorized, escalated, or blocked before release.

Converts allowed, blocked, escalated, rewritten, or reviewer-labeled outcomes into clean improvement signals.

Tracks how AI behavior, risk, confidence, routing pressure, and governance readiness change over time.

API integration path for tool calls, memory writes, ontology changes, policy mappings, and multi-agent handoffs, with full enforcement middleware rolling out next.
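For readers who want a concrete picture of the runtime API response described in this list, the following Python sketch models one possible shape. Only `routed_to` is named in the text above; every other field name is an assumption, and this is not the published JSON contract.

```python
import json
from dataclasses import dataclass
from typing import List, Optional

VALID_DECISIONS = {"ALLOW", "BLOCK", "ESCALATE"}


@dataclass
class DecisionResponse:
    decision: str                 # ALLOW, BLOCK, or ESCALATE
    release_status: str           # hypothetical field name
    routed_to: Optional[str]      # reviewer route; None when nothing is routed
    business_effect: str          # hypothetical field name
    reason_codes: List[str]
    evidence_refs: List[str]      # hypothetical field name
    audit_id: str                 # hypothetical field name


def parse_decision(payload: str) -> DecisionResponse:
    """Parse a JSON response and reject anything outside the three decisions."""
    data = json.loads(payload)
    if data.get("decision") not in VALID_DECISIONS:
        raise ValueError(f"unexpected decision: {data.get('decision')!r}")
    return DecisionResponse(**data)
```

Validating the decision value on the client side means an unexpected response fails loudly instead of being treated as an implicit approval.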

Monitoring tools observe logs. Evals test models before deployment. GRC tools document controls. OntoGuard analyzes AI behavior itself, identifies risk and review burden, governs autonomy when needed, and turns each governed run into proof and learning signals.

OntoGuard is not only an audit story. In a pilot, its artifacts map to the buyer baseline inputs needed to calculate review minutes saved, escalation-rate reduction, false-approval prevention, faster cycle time, and safer launch velocity.

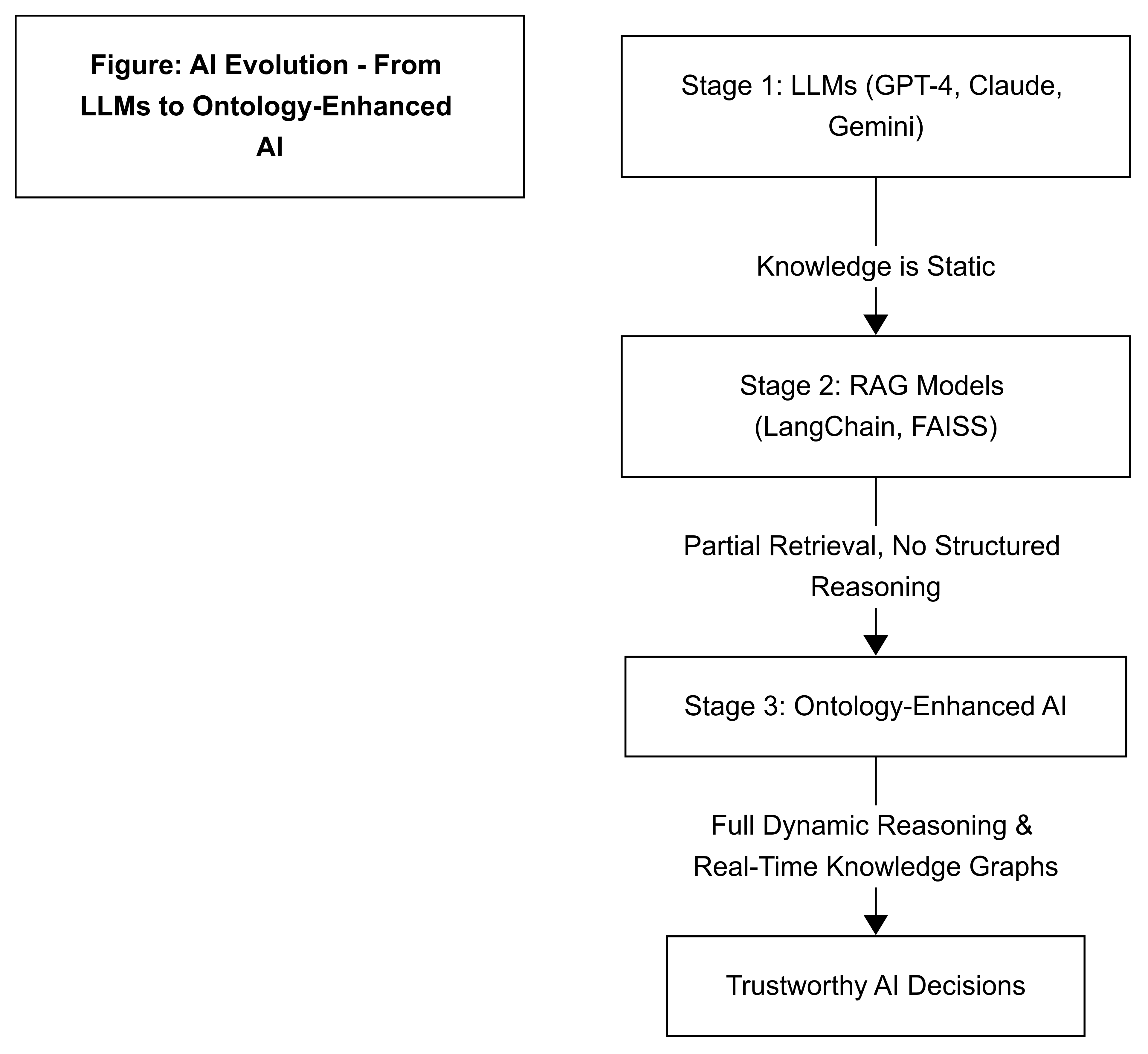

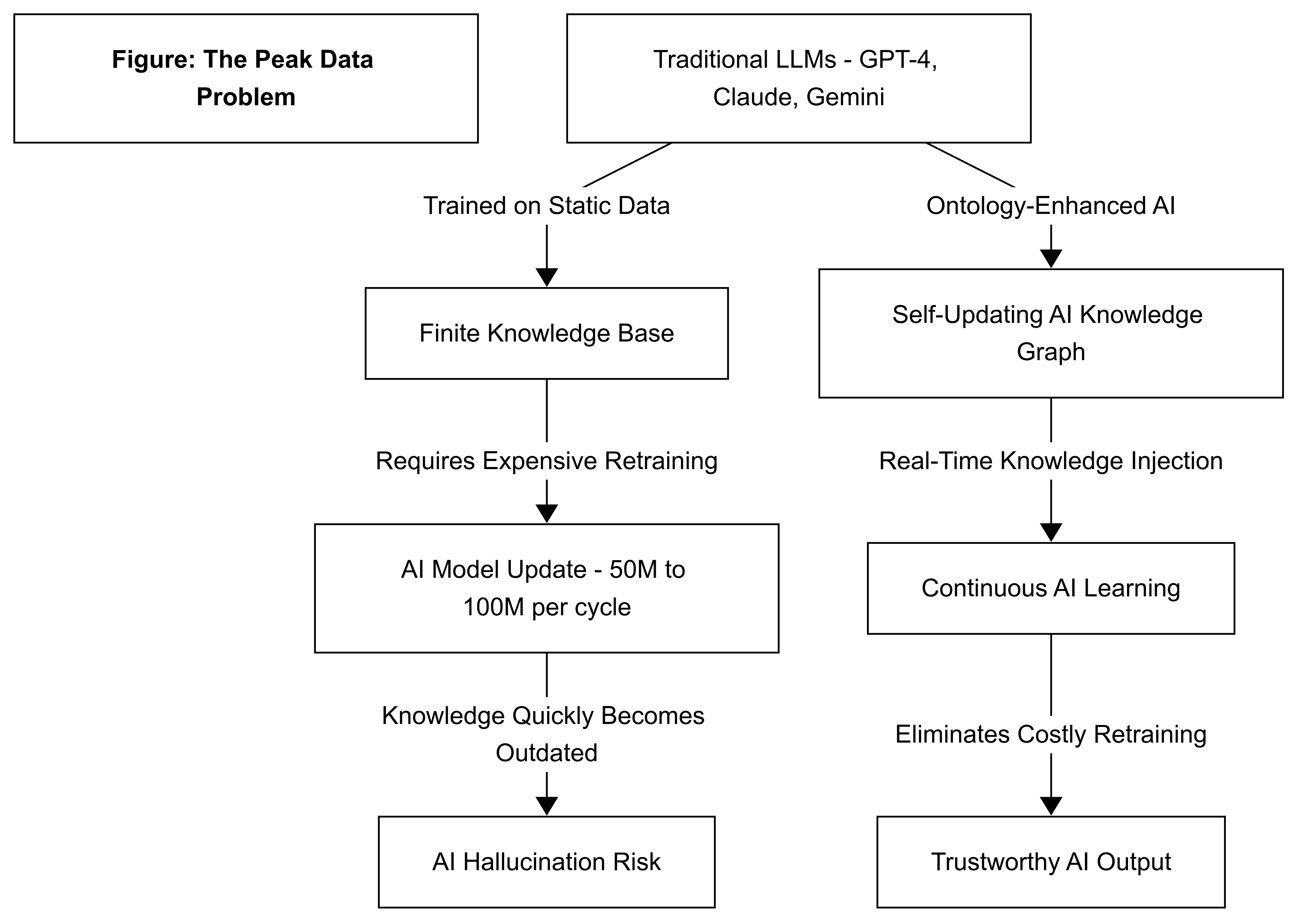

Most ontology work stops at knowledge representation. OntoGuard takes ontology into runtime: it grounds every AI proposal in enterprise objects, relationships, policies, and evidence, then uses that semantic layer to authorize, block, or escalate the proposed state transition — with full traceability and learning feedback.

This is what makes OntoGuard different: ontology becomes the active reasoning and authorization layer, not just a static data model.

OntoGuard is the Ontology AI and Semantic Layer for enterprise AI: a runtime cognitive control plane, Reasoning Layer, and Cognition Layer for state-transition governance. Modern AI systems no longer just answer prompts; they call tools, write memory, update knowledge graphs, trigger workflows, hand work to other agents, and generate training signals that change future behavior.

From LLM outputs and training signals to tool calls, memory updates, ontology changes, policy mappings, and multi-agent handoffs (via integration), OntoGuard turns every proposed state change into a governed, auditable decision with evidence, routing, risk, uncertainty, arbitration transparency, audit hashes, and improvement signals.

OntoGuard governs the commit boundary. It asks whether a proposed AI state transition is authorized, traceable, evidence-backed, and safe to release before the change touches a customer, workflow, regulator, record, or downstream system.

The result is a Governed State Transition Record — buyer-safe proof showing decision, scope, evidence, and improvement signal.

The Decision Authorization Layer is the high-stakes release-control capability inside the broader Semantic Control Plane.

Regulatory compliance is not the ceiling. It is the first high-value use case for a broader enterprise control plane.

Regulated buyers need governance that is resilient under ambiguity. OntoGuard is designed so uncertainty does not erase the audit trail.

Hard failures are converted into explicit abstention, SAFE_TEMPLATE, or HUMAN_REVIEW routing so the governance record still exists.

Every governed run is expected to produce a buyer-readable PDF and complete JSON packet, even when the answer is withheld.

If evidence is sparse, OntoGuard records fallback reasons, provisional review anchors, uncertainty, and next human step instead of silently emitting an empty proof trail.
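The fail-safe behavior described above can be sketched as a wrapper that always returns a packet. This is a minimal illustration under stated assumptions: the packet keys, the `RELEASE` route label, and the rule for choosing SAFE_TEMPLATE versus HUMAN_REVIEW are hypothetical, since the source does not specify them.

```python
from typing import Callable, Dict


def govern(run_id: str, evaluate: Callable[[], Dict]) -> Dict:
    """Always return a governance packet, even when the answer is withheld."""
    packet = {"run_id": run_id, "answer": None, "route": None,
              "fallback_reason": None, "next_human_step": None}
    try:
        result = evaluate()
    except Exception as exc:
        # Hard failure: withhold the answer but keep the record (assumed routing).
        packet.update(route="HUMAN_REVIEW",
                      fallback_reason=f"evaluation_error:{type(exc).__name__}",
                      next_human_step="Reviewer decides manually or re-runs")
        return packet
    if not result.get("evidence"):
        # Sparse evidence: record why, rather than emitting an empty proof trail.
        packet.update(route="SAFE_TEMPLATE",
                      fallback_reason="sparse_evidence",
                      next_human_step="Attach supporting evidence and re-run")
        return packet
    packet.update(answer=result["answer"], route="RELEASE")
    return packet
```

The point of the design is that every code path ends in a populated packet, so uncertainty changes the route and never erases the audit trail.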

OntoGuard is not just ontology lookup and not just scoring. It uses ontology throughout the governance path to connect an LLM output to policy, evidence, scope, trace, human review, and learning feedback.

OntoGuard uses a three-layer system to turn raw AI outputs into governed, auditable decisions. Layer 1 grounds outputs to real enterprise objects and rules. Layer 2 checks accuracy, risk, uncertainty, and compliance. Layer 3 turns every approved or corrected decision into a learning signal that improves future retrieval, policy, and agent behavior.

Result: fewer incidents, faster audits, clearer human review, and safer autonomy without changing model weights.

The technical view below shows how L1 symbolic grounding, L2 semantic consensus, and L3 alignment feedback produce the Decision API, Governed State Transition Record, evidence pack, triad, audit credential, and improvement signal.

Normalizes the governed prompt and response into clause hits, evidence IDs, retrieval IDs, checksums, regulation/domain scope, and a buyer-readable symbolic trace.

Compares compliance, accuracy, risk, uncertainty, hallucination status, and agent disagreement before deciding whether release is safe.

Turns governed outcomes into reusable improvement signals for retrieval, policy, alignment, training data curation, and future agent decision quality.
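The consensus step in the second layer can be pictured as several agents voting on the same proposal. The Python sketch below is only an assumed arbitration rule (any BLOCK vote blocks, only unanimous ALLOW releases, anything else escalates); the source does not describe how OntoGuard's native arbitration is actually computed.

```python
from collections import Counter
from typing import Dict


def arbitrate(votes: Dict[str, str]) -> Dict:
    """Combine per-agent votes into one decision plus a simple agreement measure."""
    if not votes:
        raise ValueError("at least one agent vote is required")
    tally = Counter(votes.values())
    if "BLOCK" in tally:
        decision = "BLOCK"          # assumed: a single BLOCK vote is decisive
    elif len(tally) == 1 and "ALLOW" in tally:
        decision = "ALLOW"          # assumed: release only on unanimous ALLOW
    else:
        decision = "ESCALATE"       # any disagreement goes to a human
    # Consensus = share of agents that agree with the most common vote.
    consensus = tally.most_common(1)[0][1] / len(votes)
    return {"decision": decision, "tally": dict(tally), "consensus": consensus}
```

Exposing the tally alongside the decision keeps the disagreement visible to the reviewer instead of hiding it behind a single verdict.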

Agentic systems don’t just answer — they change state. OntoGuard already governs LLM outputs and is extending the same rigorous control to tool calls, memory, and agent actions. The question now is: is this proposed transition authorized, traceable, and safe to commit?

Seven event types can become ALLOW, BLOCK, or ESCALATE decisions with evidence, scope, audit hashes, and improvement signals.

Risk if ungoverned: unsupported answers, misleading recommendations, unsafe release, or false confidence.

OntoGuard produces: Decision API, evidence pack, triad, hallucination status, audit hashes, and review route.

Current Status: The same Decision API and JSON contract used for LLM outputs is designed to support these use cases.

How it works: Agent frameworks can call OntoGuard’s Decision API before executing tool calls, memory writes, ontology changes, policy updates, or agent handoffs.

Expansion Roadmap: Full enforcement middleware and framework adapters (LangChain, CrewAI, AutoGen, etc.) are rolling out in Q3 2026.
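The integration pattern described here, where an agent framework asks for a decision before it executes an action, can be sketched as a Python decorator. The `decide` callable stands in for a call to the Decision API; `governed`, `ActionNotAuthorized`, `demo_policy`, and the proposal shape are hypothetical names for this example, not part of any OntoGuard SDK.

```python
import functools
import inspect
from typing import Any, Callable, Dict


class ActionNotAuthorized(Exception):
    """Raised when a proposed action is blocked or escalated."""


def governed(decide: Callable[[Dict[str, Any]], str]) -> Callable:
    """Wrap a tool so it only runs after `decide` returns ALLOW."""
    def decorator(tool: Callable) -> Callable:
        @functools.wraps(tool)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            # Bind arguments to parameter names so the policy sees a stable shape.
            bound = inspect.signature(tool).bind(*args, **kwargs)
            proposal = {"action": tool.__name__, "arguments": dict(bound.arguments)}
            decision = decide(proposal)
            if decision != "ALLOW":
                raise ActionNotAuthorized(f"{tool.__name__} -> {decision}")
            return tool(*args, **kwargs)
        return wrapper
    return decorator


def demo_policy(proposal: Dict[str, Any]) -> str:
    # Stand-in for a remote decision call: escalate large refunds (made-up rule).
    return "ALLOW" if proposal["arguments"].get("amount", 0) <= 100 else "ESCALATE"


@governed(demo_policy)
def issue_refund(customer_id: str, amount: float) -> str:
    return f"refunded {amount} to {customer_id}"
```

Because the check sits in front of the call, a BLOCK or ESCALATE result means the tool body never runs; the caller handles `ActionNotAuthorized` by routing the proposal to a reviewer.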

Risk if ungoverned: bad examples, unsafe corrections, or unreviewed behavior changes feeding future systems.

OntoGuard produces: gold-example candidates only when governed outcomes are approved, corrected, and traceable.

Enterprise systems already run on objects, relationships, and rules. OntoGuard turns that structure into Ontology AI: the Semantic Layer that lets AI reason over enterprise reality before a proposed state transition becomes a decision.

Maps prompts, outputs, policy scope, BM25 evidence, clause hits, enterprise objects, and symbolic traces into one governed representation.

Turns semantic evidence into allowed, blocked, or escalated decisions with uncertainty, risk, arbitration, and human-review routing.

Feeds approved or corrected outcomes back into L3 training signals, policy improvements, retrieval improvements, and future governance quality.

The buyer-readable PDF is one view of the proof. The Governed State Transition Record is the structured JSON contract underneath it. In the current v1.2.0 lineage, the record already carries the fields needed for audit, routing, repair, and improvement loops.

The same governance packet that protects a workflow can also create clean, reviewable signals for better retrieval, policies, evaluation sets, and future agents.

This is a buyer-safe financial services pilot output based on a governed enterprise workflow. OntoGuard mapped financial-services evidence, detected a remaining SEC citation-linkage gap, failed the strict autonomous-release benchmark gate, and withheld release pending human review — exactly the commercially valuable control-plane behavior regulated buyers need.

Hard-dollar ROI not calculated — buyer baseline required.

To calculate ROI, provide:

Core capabilities — LLM Output Governance and L3 Training Signals — are live in production today. The same governance engine and Decision API contract extend to agentic workflows through API integration.

Full runtime governance layer, buyer-safe telemetry, audit hashes, human-review routing, and closed-loop training signal export.

Tool call authorization, memory write control, ontology change proposals, policy mapping updates, and multi-agent handoff governance.

Available now via API integration. Full enforcement middleware and framework adapters are rolling out in Q3 2026.

OntoGuard governs proposed AI state transitions in workflows where mistakes become cost, regulatory exposure, operational delay, or customer harm.

KYC and onboarding approvals, claims and dispute resolution, underwriting and credit decisions, advisor copilots, SEC / FINRA reporting support, fraud operations, and customer communications.

Prior authorization, eligibility checks, clinical documentation, coding support, patient triage, care navigation, medical chatbot review, and safety escalation.

Benefits and eligibility determinations, casework, investigations, procurement approvals, customer operations, refunds, exceptions, IT automation, and change approvals.

OntoGuard exports the Semantic Layer and Reasoning Layer: ontology scope → BM25/semantic evidence → clause coverage → arbitration → audit hashes → human routing → L3 signal.

v1.2.0-style structure carries before state, proposed action, evidence state, decision, triad metadata, audit credential, and improvement signal.

Current-run prompt, response, and scope anchors feed lexical BM25, semantic retrieval, clause normalization, checksums, and no-silent-drop telemetry.

Cold index is penalized when decision-driving regulations such as FINRA, GLBA, SEC, or SOX have zero coverage, even when supplemental regulations are mapped.

Buyers see clean decision evidence; internal lanes preserve repair flags, monotonic markers, schema fences, diagnostics, and repair provenance.

Compliance, Accuracy, Risk, and Feedback agents expose votes, disagreement, consensus, and native arbitration computation.

Financial pilot artifacts expose 87-key JSON depth, hashes, coverage gaps, evidence anchors, schema-constrained fields, and L3 improvement readiness.
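The coverage rule mentioned in this list, where an index counts as cold if any decision-driving regulation has zero coverage regardless of supplemental matches, can be illustrated with a short Python sketch. The function name, the hit-count input, and the output keys are assumptions for this example only.

```python
from typing import Dict, Iterable


def index_status(primary: Iterable[str], hits: Dict[str, int]) -> Dict:
    """Flag the index as cold when any primary regulation has no evidence hits."""
    primary_set = set(primary)
    # A primary regulation missing from `hits` is treated the same as zero hits.
    gaps = sorted(r for r in primary_set if hits.get(r, 0) == 0)
    supplemental = sorted(r for r, n in hits.items() if n > 0 and r not in primary_set)
    return {
        "cold": bool(gaps),
        "primary_gaps": gaps,
        "supplemental_mapped": supplemental,
    }
```

In this sketch, four GDPR hits do not compensate for zero SEC hits when SEC is in the primary scope, which mirrors the distinction between covering the regulations that matter and merely finding some evidence.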

OntoGuard is broader than compliance tooling, but regulatory readiness remains a powerful entry point. The platform can expose whether the regulations that matter for a workflow are actually covered — not merely whether some evidence was found.

FINRA, GLBA, SEC, SOX, credit, advisory, customer communications, trading support, regulated reporting, and primary-scope coverage gaps.

HIPAA, PHI handling, clinical support workflows, prior authorization, medical chatbot review, patient communication, and safety escalation.

GDPR, EU AI Act readiness, privacy obligations, risk disclosures, human-review routing, and evidence-backed decision records.

Eligibility, procurement, exception handling, casework, audit trails, reviewer accountability, and policy-change governance.

Every packet can expose a buyer-readable next human step tied to the exact L1, L2, or L3 capability that needs remediation.

Buyers do not need another opaque score. They need a defensible answer to four questions: should this AI change commit, why, what evidence proves it, and what happens next?

OntoGuard uses a three-layer Semantic Governance Stack: L1 Symbolic Grounding, L2 Semantic Consensus, and L3 Alignment Feedback. The result is not just a score — it is a governed release decision with evidence, traceability, and reusable learning signals.

📄 NDA Access Request

OntoGuard is protected by U.S. Patent Application 19/444,521 — Track I prioritized examination granted May 2026; the technology produces runtime authorization decisions backed by evidence, routing, auditability, and improvement signals.

Public sample packet available now. Full technical details, claims mapping, and private demo assets available under NDA.

State-transition governance asks whether a proposed AI change is authorized, traceable, and safe to commit. The proposal may be an LLM output, tool call, memory write, ontology update, policy mapping, training signal, or multi-agent handoff.

Not at the same production level as LLM output governance yet. The core Decision API and JSON contract are designed to support these use cases through integration. Agent frameworks can call OntoGuard before executing actions. Full enforcement middleware and adapters for LangChain, CrewAI, and other frameworks are currently in development.

A Decision Authorization Packet is a portable PDF and JSON proof artifact showing how a high-stakes AI output was authorized, escalated, or blocked before release. It records the ALLOW, BLOCK, or ESCALATE decision, evidence, reasons, uncertainty, hallucination status, symbolic trace, human-review routing, audit hashes, and improvement signals.

No. Regulatory readiness is a strong wedge, but OntoGuard is positioned as a runtime cognitive control plane for governing proposed state transitions across enterprise workflows, agentic systems, memory, tools, ontology, and training feedback.

Ontology-grounded objects, relationships, rules, clause hits, domain scope, evidence, and symbolic traces connect AI proposals to enterprise reality. This Semantic Layer becomes the Reasoning Layer and Cognition Layer that lets OntoGuard prove why a state transition was allowed, blocked, or escalated.

The current production center is LLM output governance and L3 training-signal export. The same packet contract extends through integration to agent tool calls, memory writes, ontology changes, policy mappings, and multi-agent handoffs, with full enforcement middleware and framework adapters in active development.

It shows OntoGuard doing the commercially valuable thing: withholding release despite an 81.41% trust score because a SEC citation-linkage gap remained and the strict autonomous-release benchmark gate did not pass.

The packet still exports. OntoGuard routes uncertainty to SAFE_TEMPLATE or HUMAN_REVIEW, records reason codes, preserves audit hashes, flags coverage gaps, and avoids silently blank evidence or failed artifacts.

Send 50–500 prompts, responses, API logs, or demo outputs. OntoGuard will return a behavior risk map, review-burden analysis, autonomy-readiness tiers, training-signal candidates, and sample proof packets for high-risk outputs.

No internal document upload, production deployment, model retraining, model-weight changes, or workflow interruption is required to start.

For deeper diligence, we can provide NDA terms, private packet artifacts, integration materials, and technical briefings.

Email mark.starobinsky@ontoguard.ai for pilots, licensing, partnerships, or NDA access.